In a previous post, we learned about some interesting use cases of Testcontainers; now you’ll see how to integrate it into CI systems running within Docker containers themselves.

Integration with OpenShift

If you are using a CI environment that itself runs in a Docker container, like Jenkins in the highly recommended OpenDevStack (which is based on Red Hat OpenShift, which is in turn based on Kubernetes), you’ll run into some challenges. Your Testcontainers-based tests try to spawn Docker containers of their own from within the container the CI environment is running in, and this does not work out of the box.

In general, there are two approaches to tackle this problem:

- Docker-in-Docker: Build a custom Docker image of your CI system and provide it with a working Docker daemon. However, this is not advisable for various reasons and should only be used as a method of last resort.

- Docker wormhole pattern: Expose the Docker daemon that spawned your CI container into said container. Containers started by Testcontainers will then be “siblings” of your CI container.

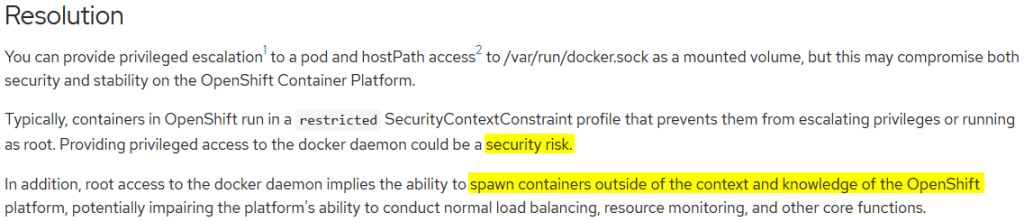

In the following, we’ll discuss the latter approach. Although Red Hat warns about its security and stability risks, it can be a viable approach if you keep an eye on them.

The Docker daemon listens on the UNIX socket /var/run/docker.sock, which we need to expose into our CI container.

First off, ssh into your OpenShift installation to determine the group id of the docker group that the daemon is running in by issuing a command like getent group:

[docker@minishift ~]$ getent group

...

docker:x:1000:docker
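To pull out just the numeric id instead of scanning the whole list, the output can be filtered by group name. The following is a minimal sketch: the helper name group_gid is made up for illustration, and it assumes the usual name:password:gid:members layout of getent output. If you only care about one group, getent group docker | cut -d: -f3 does the same in a single line.

```shell
#!/bin/sh
# group_gid is a hypothetical helper, not part of any tool.
# It reads getent-style lines (name:password:gid:members) on stdin
# and prints the gid of the group named in $1.
group_gid() {
  awk -F: -v name="$1" '$1 == name { print $3 }'
}

# Demo with the sample line from above; on the OpenShift host you
# would run:  getent group | group_gid docker
printf 'root:x:0:\ndocker:x:1000:docker\n' | group_gid docker
```

With the sample input, the demo call prints 1000.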

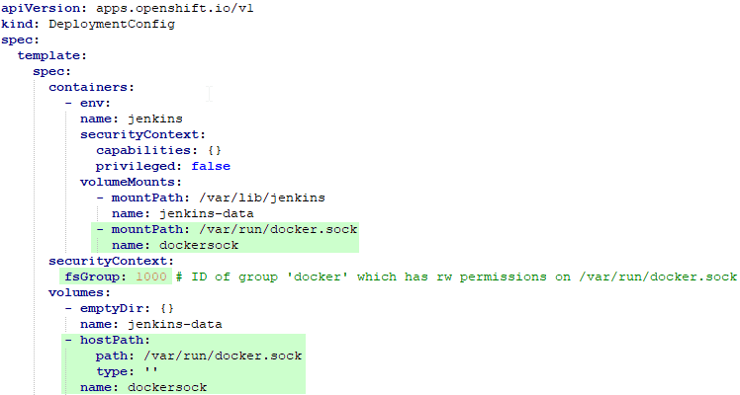

In our case, the determined group id is 1000. Then, log in to your OpenShift web interface and edit the deployment config of your CI system (Jenkins in this case) by adding these lines (marked green) to it:

This instructs OpenShift to run the Jenkins container within the group of the Docker daemon and to expose its socket by mounting it into the container.
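As a rough sketch, a change of this kind could also be prepared on the command line as a patch for oc patch. Everything specific here is an assumption: the deployment config and container are both assumed to be called jenkins, the volume name docker-sock is made up, and using supplementalGroups plus a hostPath volume is one plausible way to express "run in the docker group and mount the socket", not necessarily the exact lines of the original config.

```shell
#!/bin/sh
# Assumed values; adjust them to your cluster.
DOCKER_GID=1000          # group id determined with getent
DC_NAME=jenkins          # hypothetical DeploymentConfig name
CONTAINER_NAME=jenkins   # hypothetical container name in the pod template

# build_patch is a hypothetical helper that prints a merge patch:
#   $1 = docker group id, $2 = container name
build_patch() {
  cat <<EOF
{"spec":{"template":{"spec":{
  "securityContext":{"supplementalGroups":[$1]},
  "containers":[{"name":"$2",
    "volumeMounts":[{"name":"docker-sock","mountPath":"/var/run/docker.sock"}]}],
  "volumes":[{"name":"docker-sock","hostPath":{"path":"/var/run/docker.sock"}}]
}}}}
EOF
}

# Print the patch for review first. Once it looks right, it could be
# applied as an OpenShift administrator with:
#   oc patch dc/"$DC_NAME" -p "$(build_patch "$DOCKER_GID" "$CONTAINER_NAME")"
build_patch "$DOCKER_GID" "$CONTAINER_NAME"
```

Printing the patch before applying it keeps the step reviewable, which seems prudent given that it hands the container access to the host's Docker daemon.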

Normally, containers in OpenShift run with restricted privileges. In order to allow mounting volumes (or sockets, in this case) of the host into the container, we need to add an appropriate Security Context Constraint (SCC) that includes the hostPath plugin to the user that runs the Jenkins container. We can do this by issuing a command like oc adm policy add-scc-to-user hostaccess jenkins as an OpenShift administrator. You could also define your own SCC rather than using the hostaccess SCC provided by OpenShift.

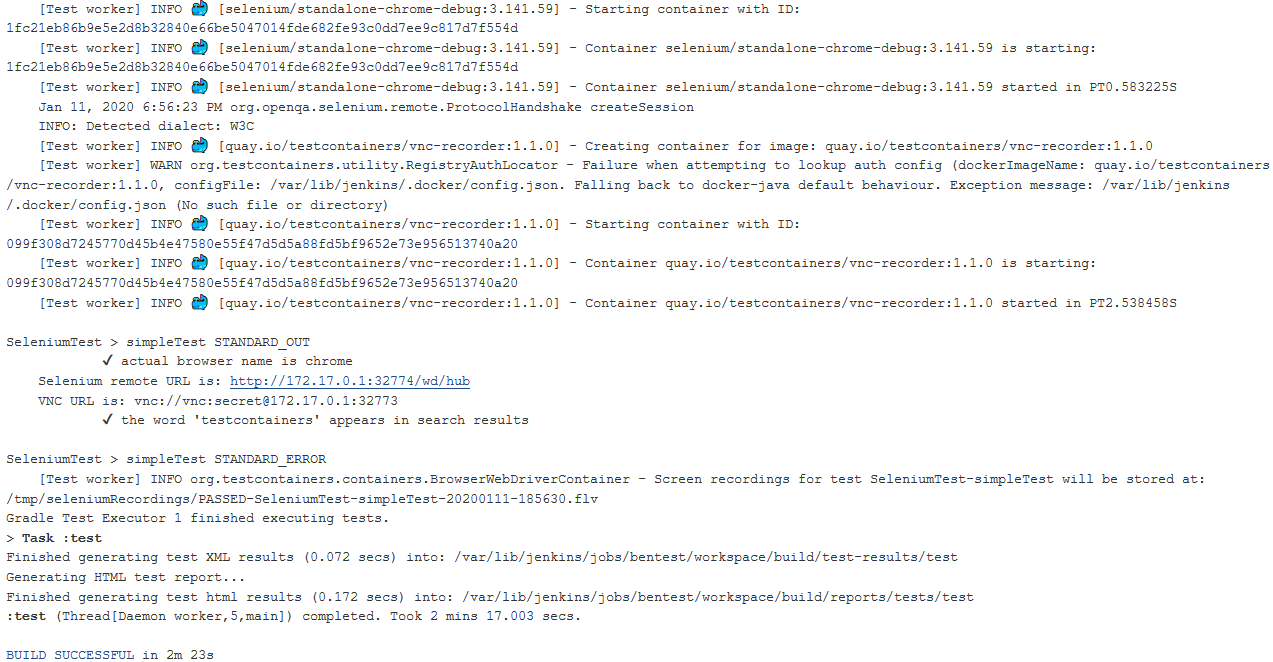

One might think that we are done by now. However, if you try to run your tests at this point, you’ll get a permission denied error upon accessing docker.sock. Why is that? OpenShift runs on CentOS, which has SELinux enabled. In order to lift its constraints, ssh into your OpenShift installation to download and build selinux-dockersock. With this small SELinux policy added, you should finally see some whales merrily spouting into your Jenkins console:

Happy testing! 🐳
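Should the whales stay away, a quick look at the socket from inside the Jenkins container can help tell a missing mount from a group problem. This is only a sketch: check_docker_sock is a made-up helper, and it tests plain file permissions only, so a passing check may still be followed by a denial from SELinux when a client actually connects.

```shell
#!/bin/sh
# check_docker_sock is a hypothetical troubleshooting helper.
# It inspects the socket path given in $1 (default: /var/run/docker.sock).
check_docker_sock() {
  sock=${1:-/var/run/docker.sock}
  if [ ! -S "$sock" ]; then
    # Nothing mounted at that path, or it is not a UNIX socket.
    echo "missing: $sock is not a socket (is the hostPath volume mounted?)"
    return 1
  fi
  if [ ! -r "$sock" ] || [ ! -w "$sock" ]; then
    # The socket is there, but the current user or groups may not use it.
    echo "denied: $sock is not readable and writable for uid $(id -u), groups $(id -G)"
    return 2
  fi
  echo "ok: $sock passes the file permission check"
}

# Usage inside the Jenkins container:
#   check_docker_sock
```

A "denied" result that lists the groups without the docker group id points back at the deployment config, while a "missing" result points at the volume mount or the SCC.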

Conclusion

Testcontainers simplifies the usage of “real” services that the system under test depends upon by providing convenient methods to start, wire up, communicate with and exchange data with dockerized applications. The only remaining dependency of the executing system is a working Docker installation. Furthermore, Testcontainers can be run from within Docker containers themselves, as might be the case for some continuous integration (CI) systems, although extra care has to be taken, as shown above.

Naturally, the performance of a Docker container with a complex application starting and shutting down cannot compete with mocks, but, if used wisely, such tests offer a greater insight into the behavior of the mimicked parts of the productive system, thus inspiring greater confidence in these tests.

Another advantage is the fact that containers always start from the known state of their image, thereby avoiding errors introduced by “leaking state”, as might be the case with a manually managed database running in a VM, for example. It follows that any configuration changes to these images have to be performed in code, leading to a “(test) infrastructure as code” approach with the added benefit of simultaneously serving as documentation.

After much praise, I’d like to end on a more cautious note. Please keep in mind that we are not removing complexity here; we are just abstracting it away, or as the saying goes:

All problems in computer science can be solved by another level of indirection… except for the problem of too many layers of indirection.

David Wheeler